The AAU-CRENAU laboratory offers a doctoral contract on situated visualization of a sensitive map of cities.

News published on 9 April 2020

Towards the situated visualization of urban sensitive cartography

Using machine learning to characterize how pedestrians perceive urban space in situ, in order to display additional information in Augmented Reality

Stakes, difficulties and objectives

Access to contextual information while moving through the city is an issue whose nature depends on when urban users act and for what purpose:

- 1/ upstream, e.g. designing sustainable and resilient cities,

- 2/ in situation, e.g. managing a health crisis,

- 3/ downstream, e.g. analyzing weather phenomena.

However, accessing this situated information remains difficult for three reasons: users are on the move; they carry at best a lightweight device (usually a mobile device); and the information provided, often made of spatial and temporal data, must be related to an immediate context that creates many attention-related disturbances (very specific sound or light alarms, for example).

It is therefore important to guarantee direct, in-situ access to this information by making the best possible use of contextual elements.

Quantification of the perception of cities

In response to traditional mapping descriptions, where space is seen or graphically expressed as a whole and from above, many initiatives, especially in sensitive cartography, have arisen to express an immersed experience of territories (Olmedo, 2011). Urban design qualities, as proposed by (Ewing et al. 2009, Ewing et al. 2016, Clemente et al. 2005), are part of this movement. Its aim is to go beyond the synthetic D-variables (“design, density, diversity, destination accessibility, and distance to transit”, excerpt from (Yin, 2017)) in order to “measure what cannot be measured” (Ewing et al. 2009) and to quantify the perception of cities’ urban qualities as experienced by urban planning experts and by pedestrians, who are considered experts of “ordinary experiences” (Thibaud, 2010).

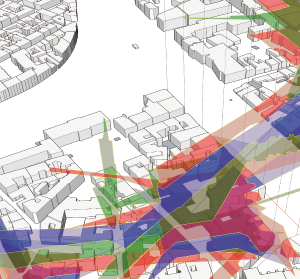

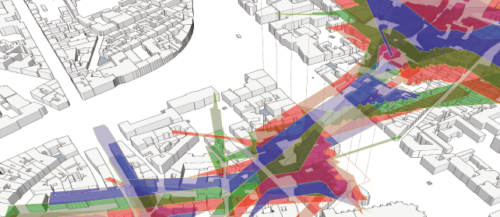

This attempt to objectify the fine characteristics (on a “micro scale”) of the street landscape that can condition the pedestrian experience as an urban design quality resulted in five indicators (“imageability”, “enclosure”, “human scale”, “transparency” and “complexity”), which have been modeled and simulated in geomatics (Yin, 2017).

Neural networks to identify urban qualities

Other research has sought to use artificial neural networks to identify and measure these qualities from a large corpus of pedestrian views (Ye et al. 2019). Convolutional neural networks (CNNs) can also help to compute maps of urban salience (Huang et al. 2019).

Augmented reality as a mediation tool adapted to urban environments

However, as Thomas et al. (2018) point out, the potential uses of quality indicators of urban design are of great interest when the indicators are presented on site to professionals using the situated analysis approach, in particular when that approach is immersive, in Augmented Reality (AR) (Whitlock et al. 2020).

AR systems and algorithms have evolved to the point that Grubert et al. (2017) introduce the concept of Pervasive Augmented Reality (PAR), in which the system constantly adapts to the user’s context to allow prolonged use across several tasks with different aims. Without going as far as generalized AR systems, mobile AR already enables the on-site geovisualization of spatialized information (Devaux et al. 2018). Displaying situated information “enhances” the user’s perception of his or her immediate environment in relation to his or her standard task (Kjeldskov et al. 2013), the information displayed being adapted to the user’s environment and behavior so as not to hinder his or her urban walk (Lages et al. 2019).

Hypotheses, contributions of the research and work plan

Whether they are extracted from real images or derived from simulations and digital models, these urban “qualities” are currently neither compared nor combined. This comparison will be one of the first contributions of this work. It will build on a long line of work carried out at the AAU laboratory on sensitive urban indicators, specifically the color of facades (Petit, 2015), urban aeraulics (Belgacem, 2019), and geoclimatic areas and vulnerability to urban overheating (Rodler & Leduc, 2019).

The urban salience maps, combined with the analysis of on-site perception in motion, will make it possible to identify salient areas where relevant information can be displayed to pedestrians in motion.

In this work, starting from the pedestrian’s on-site position, we will focus on what he or she perceives of the environment. To do so, we will link the information extracted from images to the indicators calculated by simulation in virtual environments built from simplified 3D maps of the city. Neural networks will be used to relate the “perceived” information in a network of images to the information calculated on a 3D map of the same area, either in real time or from pre-calculated information. These networks, which are meant to translate pedestrian perceptions of urban space, will be trained in two ways: through interactions with urban planning professionals, and through interactions with the “experts” of a place, i.e. its inhabitants. This digital, calculated perception of the city will give us access to the “important” areas that need to be highlighted in the pedestrian’s landscape and to those that can be hidden. Collecting these two complementary forms of expertise will make it possible to highlight and share both viewpoints, which is another major challenge of this work.

The positioning of characteristic elements in the city (such as street furniture) is akin to the spatial distribution of a set of “markers” that, once recognized, enable localization independently of other infrastructures (Antigny et al. 2018). The city can thus become both the interface and the projection surface. This work across scales, on the choice of data to display and on the types of representation, will enable an exchange of viewpoints between professionals and inhabitants, mediated by urban data and made possible by on-site localization.

In summary, the city as seen by pedestrians becomes a mediation interface between a calculation of “urban qualities” that reflects a perception and the AR visualization of that same information, or of other localized information, as a way to mediate with the data. This opens up new uses of such an interface, to be studied with both the general public and professionals, moving toward a co-construction of urban space.

This thesis is co-supervised by Myriam Servières (thesis director), Thomas Leduc and Vincent Tourre.

Submit an application for this thesis

Applications open until: 24 April 2020

Download the subject in PDF

Important: funding is currently being evaluated.

Category: AAU, CRENAU

Tags: Augmented Reality, CNN, computer vision, Sensitive visualization, urban design qualities